MTC: AI Voice Cloning, Deepfake Fraud, and Crime Junkie: What Lawyers Must Learn Now ⚖️🧠

As a tech-savvy and ethically compliant lawyer, are you prepared to handle an AI voice-call scam?

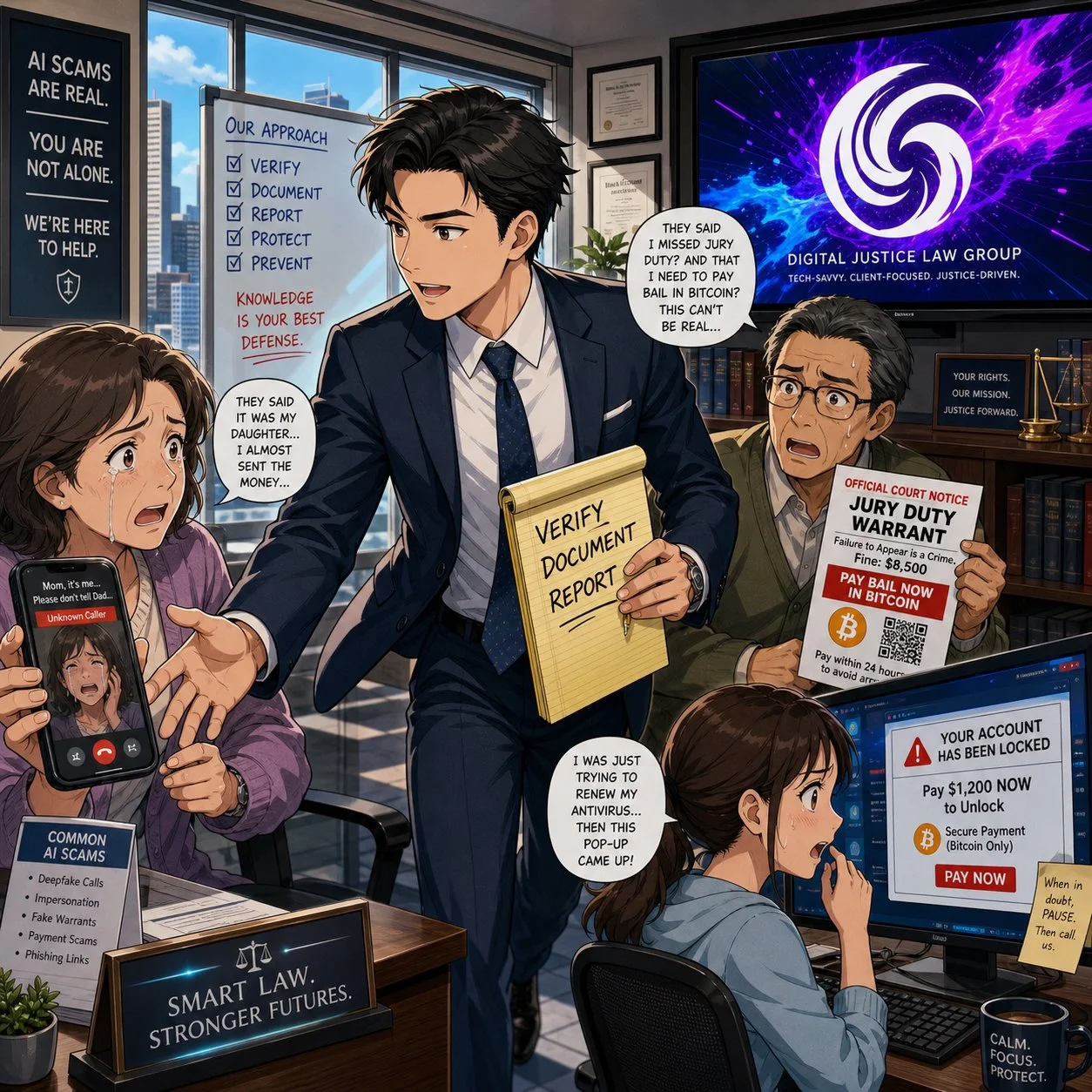

We live in a world where a client can hear their child scream for help over the phone, know that voice down to the quiver in their sobs, and still be wrong about what’s real. At the same time, lawyers are getting “official” calls from spoofed sheriff’s offices demanding Bitcoin bail payments that feel just plausible enough to pass the sniff test. If you think your clients are the only ones at risk, you’re already behind.

As a long-time Crime Junkie fan, I’m grateful to Ashley Flowers, Brit Prawat, and the Audiochuck team for doing something the legal profession hasn’t always done well: translating complex, evolving tech crime into stories real people understand. Their recent warnings about AI voice cloning, virtual kidnappings, and sophisticated online scams are more than compelling podcast episodes—they’re mandatory listening for lawyers who care about their clients, their firms, and their own digital safety.

In this editorial, I want to bridge those Crime Junkie stories into practical takeaways for solo and small-firm lawyers, AI‑curious practitioners, and even tech‑skeptical colleagues. We’ll look at how these scams work, how the ABA Model Rules already expect you to understand enough technology to spot them, and how to turn “true crime” lessons into concrete safeguards for your practice. ⚙️

When Your Ears Can’t Be Trusted: AI Voice Cloning and Virtual Kidnappings 🎙️

In “WARNING: AI Voice Cloning and Virtual Kidnappings,” Crime Junkie walks us through a terrifying call to a mother who hears her daughter sobbing, begging for her life, while a man demands a ransom and lays out graphic threats. The twist, as many of us now know, is that the daughter is safe; the “kidnappers” are using AI‑cloned audio drawn from a tiny sample of her voice to weaponize panic.

Researchers cited in the episode describe how low‑cost AI tools can create a convincing voice clone from as little as three seconds of audio. Caller ID spoofing then makes it look like the call is coming from the victim’s phone, while scammers press for fast, untraceable payments in cash, gift cards, or crypto. The technology is cheap, the scripts are refined, and the goal is simple: override your critical thinking before you can verify anything.

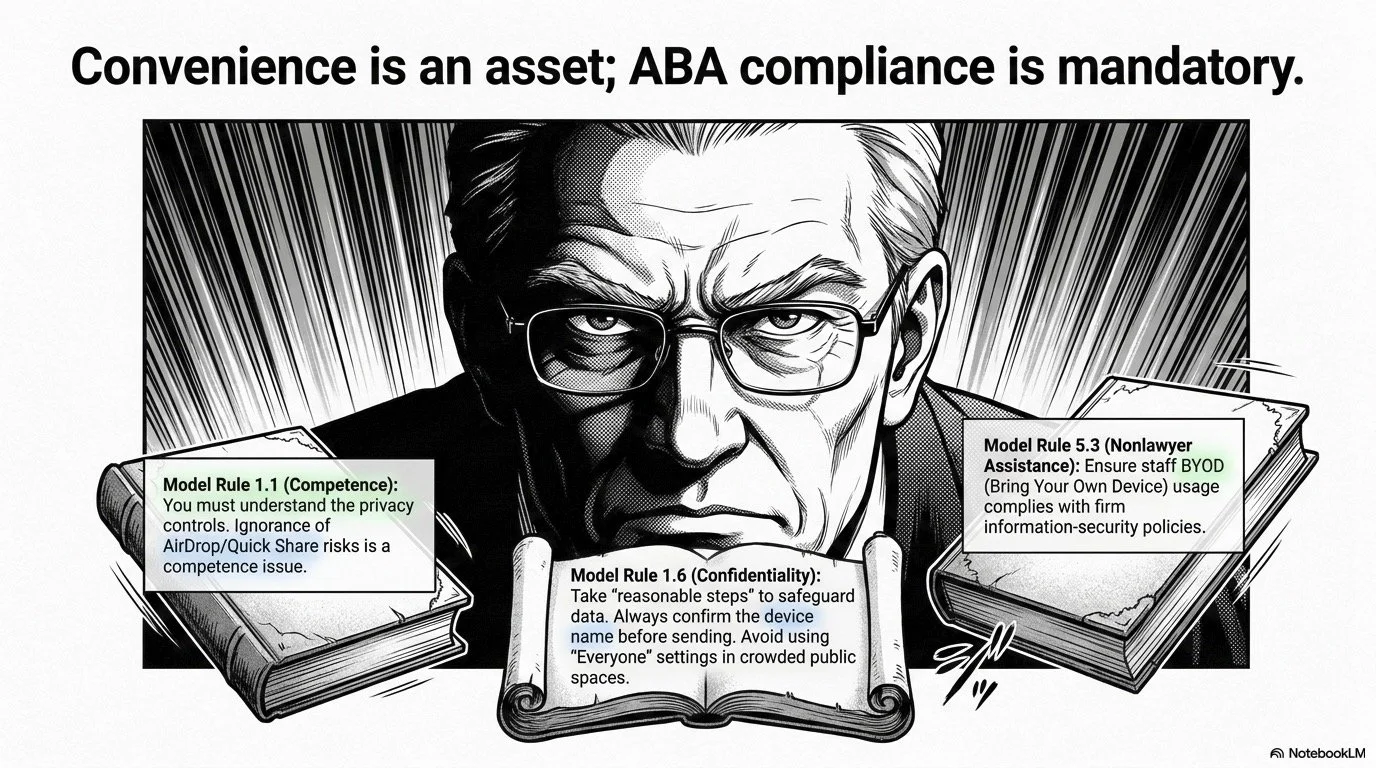

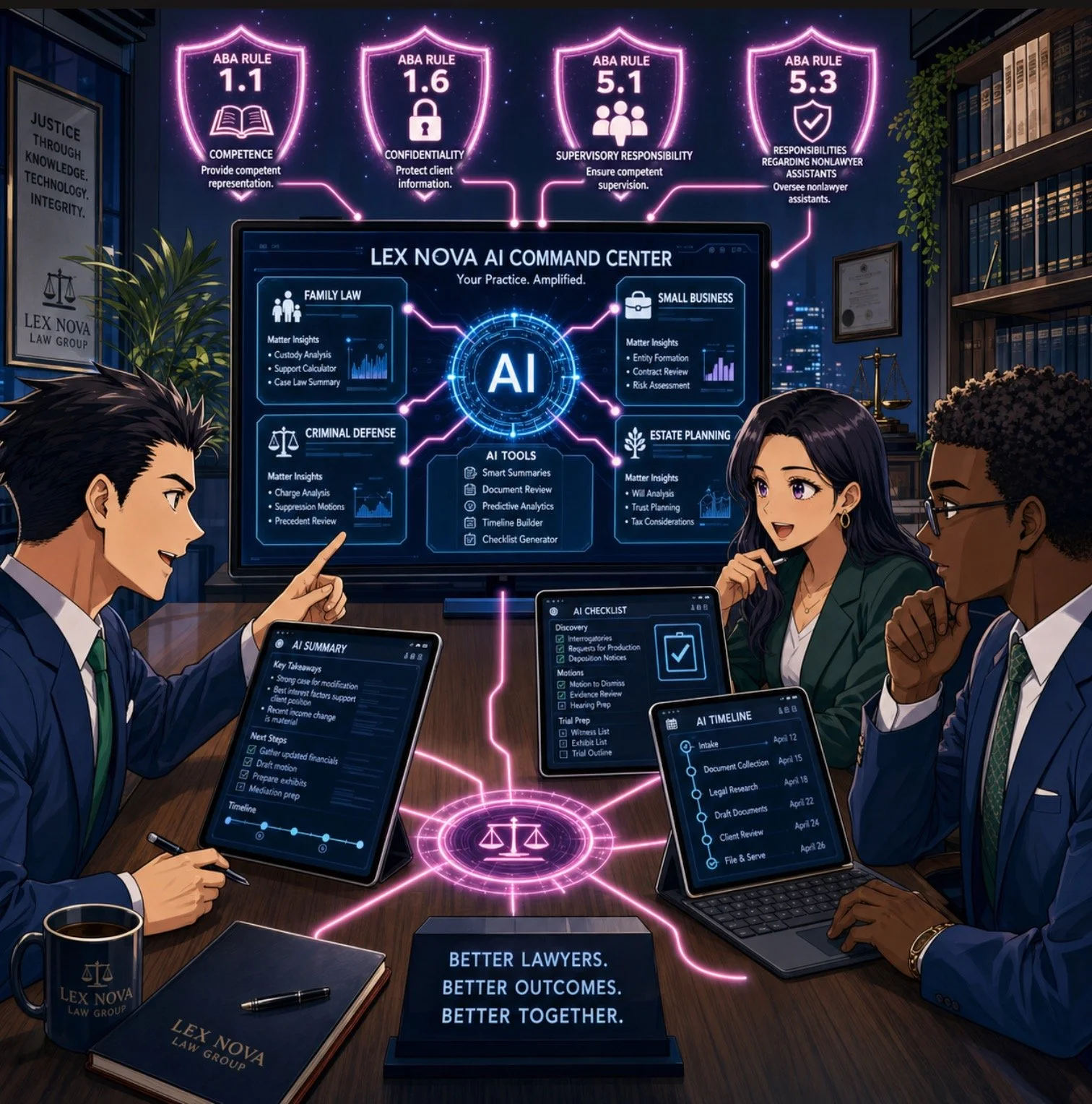

From a legal ethics perspective, this isn’t just an interesting cybersecurity anecdote. ABA Model Rule 1.1 on competence—especially Comment 8—requires you to stay abreast of “the benefits and risks associated with relevant technology.” An environment where your client can be tricked into paying a fake ransom, or where your own voice can be cloned to mislead staff or opposing parties, is very much “relevant technology.”

If you are not talking with clients and staff about AI‑driven fraud risk, you are not just missing a teaching moment—you may be edging toward a competence problem under the Model Rules.

Lessons for Client Counseling: Safe Words, Verification, and Panic‑Proof Plans 🛟

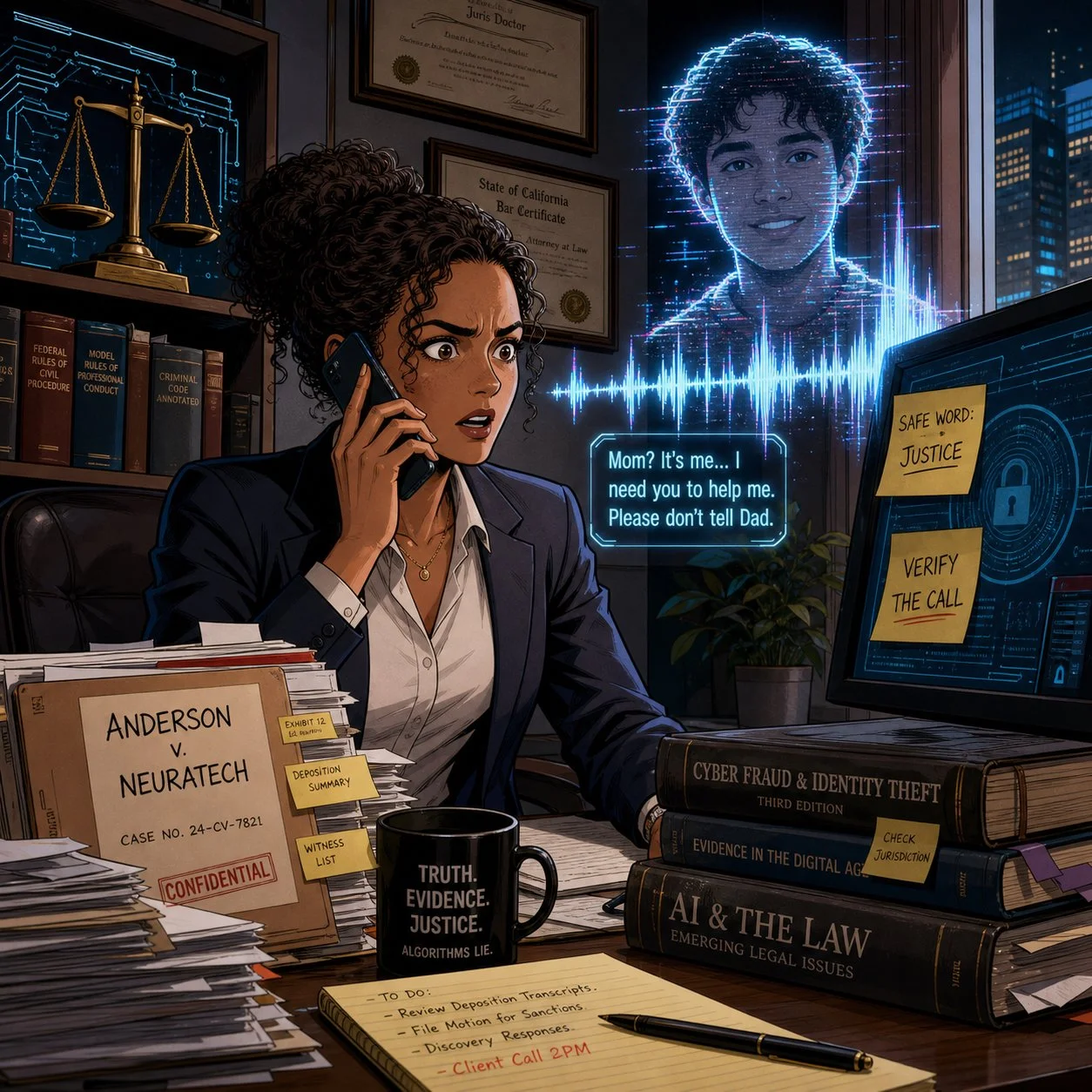

One of the most practical takeaways in the AI voice cloning episode is also one of the simplest: set a family “safe word” and a separate law office “safe word,” and rehearse how to verify calls under extreme stress. The FBI, National Cybersecurity Alliance, and digital forensics experts interviewed for the episode all echo the same theme: pre‑commitment beats improvisation when panic hits.

This is precisely the kind of low‑tech, high‑impact advice lawyers can—and should—be giving in client counseling sessions, especially with:

Family law clients dealing with high‑conflict co‑parenting or domestic violence

Estate planning clients with vulnerable or elderly relatives

Business clients whose executives or finance staff could be targeted by “CEO voice” scams

Here’s a concrete, lawyer‑friendly checklist you can adapt:

Safe Word Policy

Encourage clients to adopt a family or organizational safe word, shared only in person or via secure channels, for any call alleging an emergency or ransom demand.

Verification Protocols

Teach clients to verify via a second channel: call back on a known number, text from another device, or contact a third person who can physically locate the supposed victim.

Call 911 First When in Doubt

Emphasize that if they believe a life is at risk, they should call 911, even if they suspect it might be a scam. Law enforcement can help triage the situation; if it’s a scam, they can sort that out after.

Evidence Preservation

Tell clients to screenshot call logs, save audio, and preserve any “proof of life” photos or messages before they disappear, since some software displays photos for only a few seconds. Those artifacts can be invaluable if law enforcement or insurers later investigate.

This kind of counseling fits squarely within ABA Model Rule 2.1 (Advisor), which permits lawyers to refer to “moral, economic, social and political factors” when advising clients. You’re not just parsing statutes; you’re helping clients design their own risk‑management frameworks in a world where even their senses can be hacked.

The second Crime Junkie episode I want to share, “WARNING: Online Scams,” focuses on other kinds of technology‑enabled scams.

How Scammers Use Our Systems Against Us: Fake Warrants, Bitcoin Bail, and “Officer Smith” 👮‍♂️💸

Lawyers, are you prepared to advise your clients on AI scams?

A couple receives a voicemail from what appears to be their local sheriff’s office, learns there’s a warrant for missing jury duty, and is told they can avoid booking if they pre‑pay bail via Bitcoin and Venmo. They do their homework—they verify the number online, they look up “Officer Smith,” they cross‑check the department. Yet they still end up running between ATMs, feeding money into a Bitcoin kiosk, and nervously wiring funds to what looks like a legitimate bail account.

Only later, after calling a non‑emergency line and getting a return call from a blocked number (which is what their real department actually uses, unlike the scammer’s phone number that appeared on their caller ID), do they learn the uncomfortable truth: the “bail by Bitcoin” story was a scam.

Crime Junkie does an excellent job breaking the lessons down into clear rules:

Police will not call to give you a “heads‑up” that you’ve broken the law.

Bail is paid in person, not by Bitcoin, gift card, or Venmo.

Hanging up and calling back on a separately verified number can serve as an important safety/security step.

For lawyers, these stories are a vivid reminder that many scams are “legal‑adjacent”—they borrow just enough from real procedures (jury duty, warrants, bail, sheriff’s offices) to feel legitimate. That makes them particularly dangerous for our clients and our staff, who may over‑defer to anything with a whiff of authority.

Under ABA Model Rule 5.3, lawyers have an obligation to ensure that nonlawyer assistants act in a manner compatible with the lawyer’s professional obligations. That includes training staff to handle legal‑sounding calls skeptically: to question unusual payment methods, verify claims through known channels, and escalate suspicious calls before anyone withdraws or wires funds.

If your receptionist or office manager wouldn’t know how to respond to a call like the one just described, that’s a training gap you can fix—ideally before it becomes a loss.

Fraud in the Grey Zones: Sugar Daddies, Freelance Gigs, and Client Shame 🧾

Crime Junkie also covers scams that operate in more personal and sometimes stigmatized spaces: sugar‑daddy arrangements gone wrong; freelance “job offers” that rely on fraudulent checks; supposed production gigs that pay you to buy equipment, then claw back your real money once the check bounces. These scams involve computers, phones, the World Wide Web, and even an electronically altered check.

In the sugar‑daddy story, a young woman on a sugar‑daddy online platform is manipulated into buying hundreds of dollars’ worth of Steam gift cards to “prove” she’s not scamming her would‑be benefactor, only to realize too late that she’s been exploited. In the job offer story, a freelance audio professional is mailed a check to buy gear for a production; he wisely flags the check, closes his account, and discovers that the job posting piggybacked on a real company’s identity.

Three legal practice lessons stand out here:

Lawyers and their clients can learn a lot about AI scams and their impact from shows like Crime Junkie!

Clients may not tell you everything, especially if the scam involves sex, money, or perceived “stupidity.” The victims in these cases describe deep embarrassment and shame, which initially kept them from reporting to the police. For lawyers, this kind of hesitation could cause further bar issues beyond the incident itself.

Financial exploitation often intersects with the kinds of matters solos and small firms already handle. Think consumer protection, elder law, family law, or small business disputes. Clients who’ve been scammed may appear with half‑formed stories, partial evidence, and a strong desire to move on rather than report.

Failing to respond promptly—or at all—to suspected scams or financial exploitation can compound the harm and create independent ethics problems. When a lawyer ignores red flags, delays advising the client, or fails to investigate and remediate potential trust‑account or fraud issues, regulators may view that as a separate violation of duties of competence, diligence, communication, and safeguarding client property, even if the underlying scam originated outside the firm. In extreme cases, a pattern of slow or inadequate responses can trigger bar complaints or disciplinary investigations that focus less on the initial scam and more on the lawyer’s failure to act once on notice.

ABA Model Rules 1.4 (Communication) and 1.14 (Client with Diminished Capacity) come into play here. You must explain matters to clients in a way they can understand, but you also need to create a space where they can safely share how they were targeted without fear of ridicule. That’s emotional work, not just analytical work.

One practical move: incorporate scam‑screening questions into your intake forms and interviews. Ask clients explicitly whether anyone has recently requested unusual payment methods, impersonated a government agency, or pressured them to act quickly under threat of legal or physical harm.
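For firms that use a digital intake form, the three screening questions above could be captured as simple yes/no fields that flag a matter for follow‑up. The following is a minimal sketch under stated assumptions: the `ScreeningAnswers` record, its field names, and the `red_flags` and `needs_scam_follow_up` helpers are hypothetical illustrations, not part of any intake product or bar guidance.

```python
from dataclasses import dataclass


@dataclass
class ScreeningAnswers:
    """Hypothetical yes/no answers to the three intake screening questions."""
    unusual_payment_request: bool   # e.g., gift cards, crypto, payment apps
    government_impersonation: bool  # caller claimed to be police, a court, an agency
    pressured_under_threat: bool    # told to act fast or face legal/physical harm


# Plain-language labels (assumed wording) shown to the reviewing lawyer.
FLAG_LABELS = {
    "unusual_payment_request": "unusual payment method requested",
    "government_impersonation": "possible government impersonation",
    "pressured_under_threat": "pressure to act quickly under threat",
}


def red_flags(answers: ScreeningAnswers) -> list[str]:
    """Return a label for each screening question the client answered 'yes'."""
    return [label for name, label in FLAG_LABELS.items() if getattr(answers, name)]


def needs_scam_follow_up(answers: ScreeningAnswers) -> bool:
    """Any single 'yes' is enough to prompt a follow-up conversation."""
    return bool(red_flags(answers))


if __name__ == "__main__":
    intake = ScreeningAnswers(
        unusual_payment_request=True,
        government_impersonation=False,
        pressured_under_threat=True,
    )
    for flag in red_flags(intake):
        print("Follow up:", flag)
```

The sketch deliberately treats any single “yes” as a prompt for a conversation rather than a conclusion; the point is to give the client an opening, not to score them.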

Firm‑Level Risk: Deepfakes, Staff Training, and Incident Response 🏢🔐

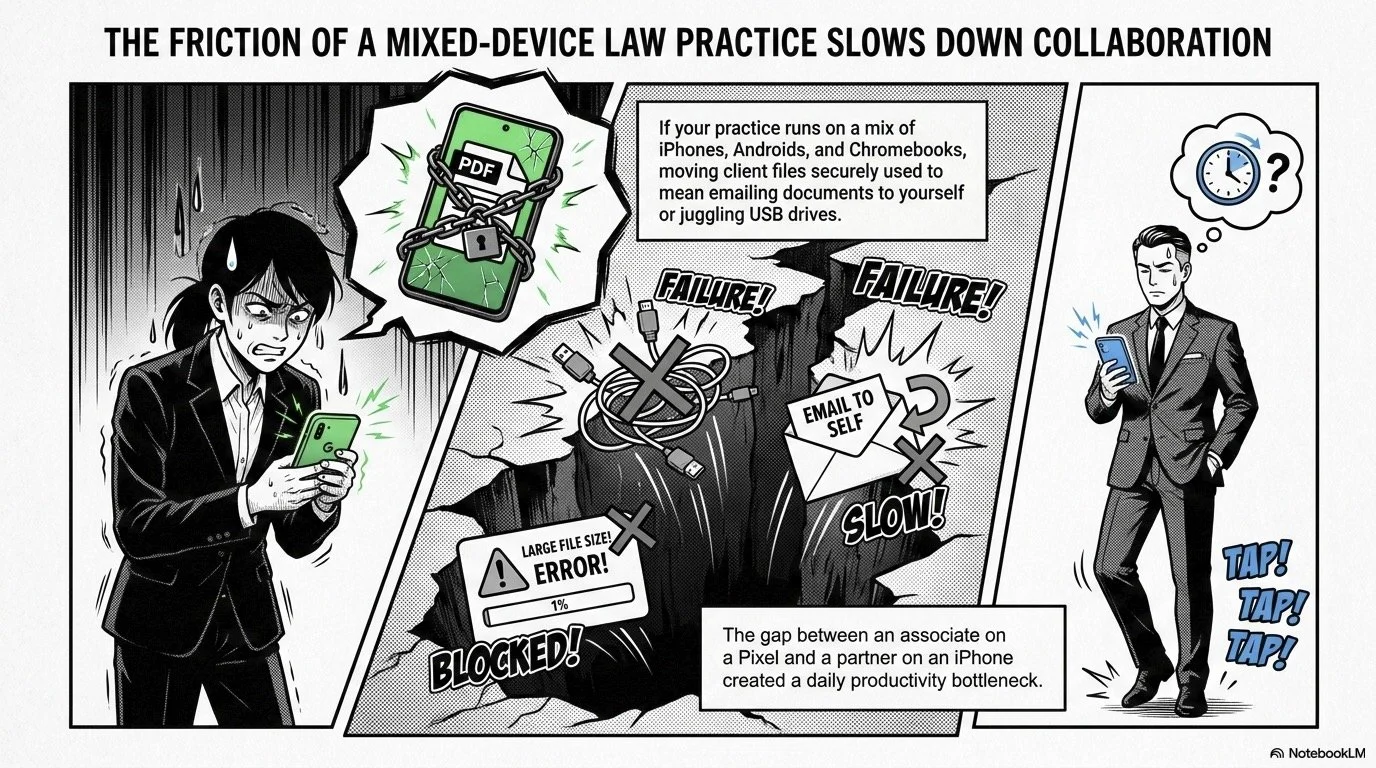

These Crime Junkie episodes also raise uncomfortable questions about law firm operations. What happens when it’s not a client but you whose voice is cloned? What if a deepfake of your voice instructs staff to release trust funds or share confidential documents?

In “WARNING: AI Voice Cloning and Virtual Kidnappings,” the FBI describes how scammers run these operations like call centers, constantly cycling through numbers and scripts to maximize success. The same industrialization is happening in business email compromise (BEC) and invoice fraud—areas where law firms are already prime targets.

Three concrete actions you can take at the firm level:

Adopt a “trust but verify” rule for any out‑of‑band instruction involving money or confidential data. No transfer of client funds, no disbursement of settlement proceeds, and no release of sensitive documents should happen based on a single phone call, even if the caller “sounds” like you.

Implement multi‑factor workflows, not just multi‑factor authentication. For example, any financial instruction must be confirmed via a second channel (secure client portal, verified email, or in‑person) before action.

Document an incident response plan that includes deepfake and scam scenarios. ABA Model Rules 1.6 (Confidentiality) and 5.1 (Responsibilities of Partners and Supervisory Lawyers) expect you to have reasonable safeguards and supervisory structures. That includes knowing what to do when—not if—your systems or people are tested.
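For firms whose practice‑management tooling can be scripted, the “second channel” rule described above might be expressed as a simple gate. This is a hedged sketch, not a definitive implementation: the `Instruction` class, the channel names in `APPROVED_CHANNELS`, and the `may_proceed` check are all hypothetical, and a real workflow would also need logging, identity checks, and supervisory sign‑off.

```python
from dataclasses import dataclass, field

# Hypothetical confirmation channels; each firm would define its own list.
APPROVED_CHANNELS = {"client_portal", "verified_email", "in_person"}


@dataclass
class Instruction:
    """A request to move money or release confidential material."""
    description: str
    origin_channel: str  # how the request arrived, e.g. "phone"
    confirmations: set[str] = field(default_factory=set)

    def confirm(self, channel: str) -> None:
        """Record a confirmation received through an approved channel."""
        if channel not in APPROVED_CHANNELS:
            raise ValueError(f"{channel!r} is not an approved confirmation channel")
        self.confirmations.add(channel)

    def may_proceed(self) -> bool:
        """True only if confirmed on an approved channel other than the origin."""
        return bool(self.confirmations - {self.origin_channel})


if __name__ == "__main__":
    wire = Instruction("Disburse settlement proceeds", origin_channel="phone")
    print(wire.may_proceed())  # False: a phone call alone is never enough
    wire.confirm("client_portal")
    print(wire.may_proceed())  # True: confirmed through a second channel
```

The design choice worth noting is that a confirmation arriving on the same channel as the original request does not count, so a cloned voice calling twice still cannot release funds.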

These are precisely the kinds of measures we walk through in The Tech-Savvy Lawyer.Page blog and podcast episodes on AI, deepfakes, and metadata—where we discuss the intersection of ethics, evidence, and emerging tech.

Bridging Crime Junkie and Legal Ethics: Story as a Compliance Tool 📚✨

Lawyers need to think calmly when confronted with any scam, AI‑driven or otherwise!

One of the most useful things about Crime Junkie is that Ashley and Brit don’t just scare you; they give you scripts, safe‑word strategies, and “here’s what to do next” checklists. Lawyers can—and should—borrow that model.

Instead of sending clients dense policy memos, consider:

Sharing these specific episodes with a short email explaining why they matter:

“WARNING: AI Voice Cloning and Virtual Kidnappings” – Crime Junkie’s breakdown of how cloned voices fuel virtual kidnapping scams and what the FBI recommends.

“WARNING: Online Scams” – Crime Junkie’s episode on fake warrants, sugar daddies, job scams, and fraudulent checks.

Pairing the episode with your own one‑page client guide that translates the stories into local, practical legal advice—how your jurisdiction handles actual warrants, how bail really works, and how you want clients to contact you if they suspect a scam.

Integrating these stories into CLEs and staff training, using them as case studies for ABA Model Rules 1.1 (Competence), 1.4 (Communication), 1.6 (Confidentiality), and 5.3 (Nonlawyer Assistants).

The goal isn’t to turn your practice into a true crime podcast. It’s about leveraging narratives your clients and staff will actually remember when the phone rings, the voice shakes, and the clock starts ticking.

Lawyers trade in words, facts, and rules. But in an era of AI voice cloning, deepfake fraud, and industrialized scamming, the difference between a near‑miss and a catastrophe may come down to whether your clients have heard the right story, and practiced the right response, before the crisis hits.

So grab your headphones, queue up Crime Junkie, and then bring those lessons into your practice. Your clients, your firm, and yes, you, will be safer for it. 🎧⚖️